Fine-tuning is the process of taking a pre-trained foundation model and adapting it to perform better on a specific task, dataset, or domain. Instead of training a model from scratch—which requires massive datasets, compute resources, and time—fine-tuning starts with a model that already has general-purpose knowledge and then refines it with smaller, targeted datasets.

This approach makes AI more practical and cost-effective for businesses. A fine-tuned model can capture domain-specific terminology, adapt to industry workflows, and provide more relevant outputs than a generic foundation model. From biotech research to financial services, fine-tuning helps transform broad AI capabilities into specialized, production-ready tools.

In the sections below, we’ll examine the process of fine-tuning, where it fits in the AI model lifecycle, and how it’s used by teams and companies today.

How fine-tuning works

Fine-tuning builds on the foundation of a pre-trained model by adjusting its parameters with a smaller, domain-specific dataset. Instead of re-learning everything from scratch, the model updates only certain layers or weights to specialize in new tasks while retaining its general-purpose knowledge.

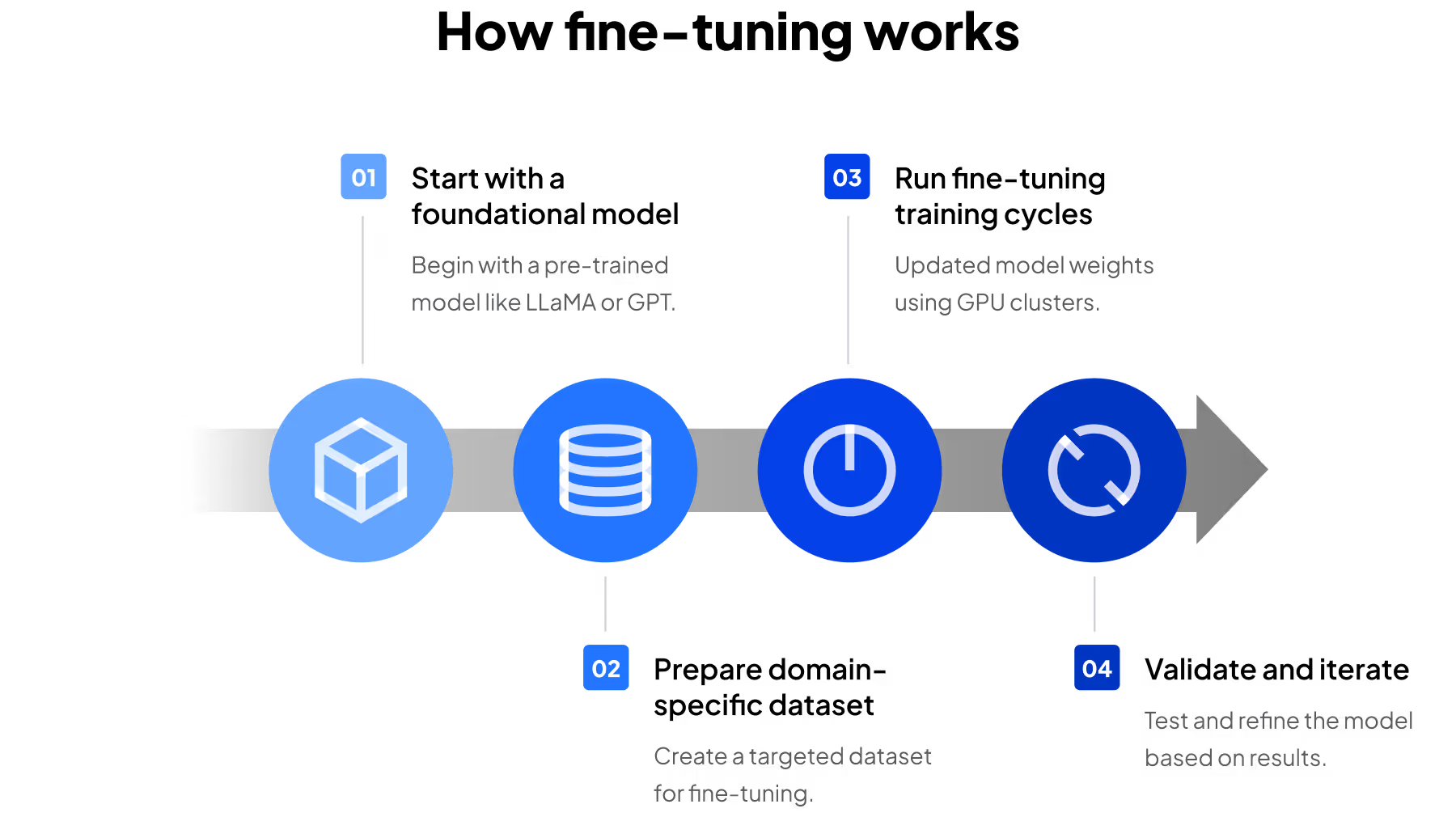

The process typically involves:

- Start with a foundation model: such as LLaMA, Mixtral, or GPT variants that have already been trained on broad, diverse datasets

- Prepare a domain-specific dataset: smaller and more targeted than pretraining data, often consisting of thousands to hundreds of thousands of documents or examples

- Run fine-tuning training cycles: where the model’s weights are updated on GPU clusters. Depending on the method, this might involve adjusting all model parameters (full fine-tuning) or only a subset (parameter-efficient fine-tuning)

- Validate and iterate: testing the adapted model against benchmarks or real-world queries, then repeating the process as data and business needs evolve

Modern techniques like LoRA (Low-Rank Adaptation) and QLoRA (Quantized LoRA) make fine-tuning more efficient by updating only a small fraction of the model’s parameters. These approaches reduce GPU memory requirements and training costs, allowing enterprises to fine-tune large models with fewer resources.

At a technical level, fine-tuning is about shifting the model’s probability distributions so that it prioritizes outputs aligned with your data and use cases. At a business level, it’s about turning a general-purpose foundation model into a tool that speaks your organization’s language.

Fine-tuning in the AI model lifecycle

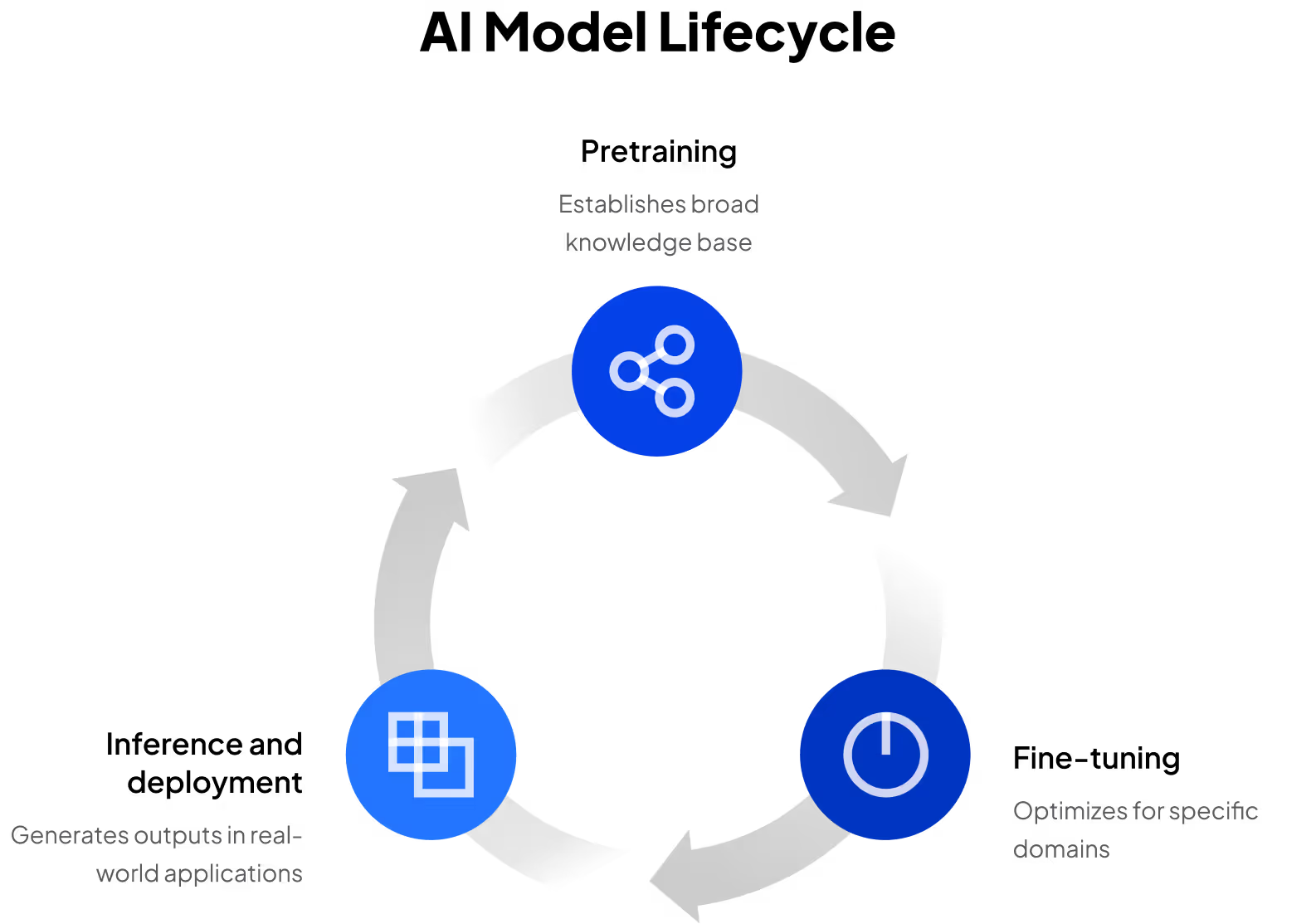

At the very highest level, every AI model follows a lifecycle: pretraining → fine-tuning → inference and deployment. This simple progression highlights how models move from broad general-purpose learning to domain specialization, and finally into production use.

- Pretraining establishes the model’s broad knowledge base by learning from massive, diverse datasets. Inference and deployment bring the model into the real world, where it generates outputs in response to user queries or business applications.

- Fine-tuning sits at the center of this lifecycle. It bridges the gap between a general-purpose foundation model and a domain-optimized solution, making it one of the most critical steps in delivering business value.

- Inference and deployment bring the ML model into the real world, where it generates outputs in response to user queries or business applications. The performance and scalability of inference are just as critical as pretraining and fine-tuning, since this is where users interact with the model daily.

However, this process is much more circular in reality. Fine-tuning is not a one-time event; it’s often repeated over time as new data emerges, regulations shift, or business requirements evolve. Monitoring and governance during deployment introduces new data and logic to the model, which can trigger additional fine-tuning or the training of a new model. This ongoing process ensures models remain accurate, relevant, and competitive.

Not every company follows this lifecycle in a strict, linear fashion. Some start by fine-tuning a foundation model to gain domain-specific intelligence quickly. Others may skip directly to deployment by using retrieval-augmented generation (RAG), which augments a foundation model with external data instead of retraining it.

While certain organizations eventually invest in pretraining their own proprietary models for maximum control, many continue to rely on fine-tuning and inference as their primary levers for delivering AI value.

Pretraining vs. fine-tuning

When adapting AI for business, companies often weigh the choice between training a model from scratch (pretraining) or fine-tuning an existing foundation model. Both approaches have their place, but they differ significantly in cost, complexity, and accessibility.

Fine-tuning is generally the more approachable path for companies looking to make AI useful in production without the massive overhead of pretraining. It allows organizations to adapt powerful foundation models to their unique needs, striking a balance between cost, control, and performance.

Real-world fine-tuning use cases

Fine-tuning turns a general-purpose model into a domain-optimized solution that’s practical for enterprise deployment. Instead of starting from scratch, companies can take advantage of existing foundation models and refine them with their own data, making AI adoption faster and more achievable.

Key benefits of fine-tuning include:

- Cost efficiency: much less expensive than training a model from scratch, while still providing meaningful customization

- Domain expertise: captures industry-specific terminology, data formats, and workflows that foundation models alone often miss

- Faster time-to-value: adaptation cycles can take weeks instead of months or years, accelerating AI deployment

- Data privacy and control: proprietary datasets remain in-house, helping organizations protect sensitive or regulated information

- Improved performance: fine-tuned models typically outperform generic models on narrow, specialized tasks

These benefits come to life through a variety of different use cases and industries.

Biotech and pharmaceutical research

Drug discovery teams generate decades of research data, often in niche formats and specialized terminology. By fine-tuning models like LLaMA or Mixtral with internal documentation, pharmaceutical companies have built AI assistants that can interpret lab notes, recognize rare molecule names, and surface insights more quickly than generic models. The result: accelerated research and development cycles, improved collaboration, and protection of valuable intellectual property.

Financial services

Trading desks and risk management teams rely on proprietary datasets that are unavailable to the public. Fine-tuning enables hedge funds, banks, and insurers to adapt models to their internal market signals, regulatory documents, and compliance frameworks. Compared to off-the-shelf models, fine-tuned systems provide sharper forecasting, faster decision-making, and a competitive edge—all while keeping sensitive data private.

Enterprise IT and operations

Large enterprises use fine-tuning to power internal tools like knowledge assistants, IT helpdesk automation, and industry-specific copilots. By refining a foundation model on company documentation, workflows, and compliance requirements, organizations reduce employee search time, improve productivity, and ensure outputs are tailored to their business. For many teams, fine-tuning is the step that turns a generalized model into a true enterprise-ready solution.