
On-demand webinar

Real Cloud Infrastructure for Real AI Workloads: Training and Inference at Production Scale

What you’ll learn in this on-demand session

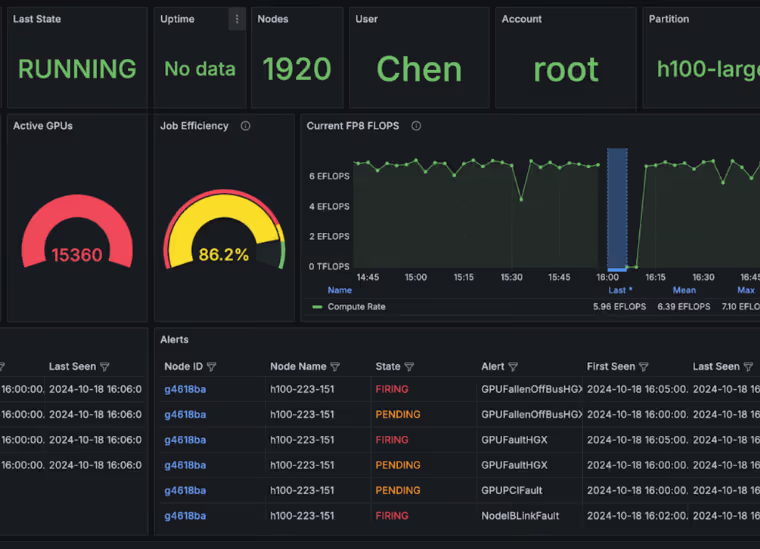

In this session, CoreWeave details how we optimize every layer of the AI stack, from infrastructure to orchestration to observability, to run large-scale training and inference workloads efficiently. We also examine the architectural breakthroughs that enable rack-scale systems to operate with ultra-low latency and high reliability.

These are the essential cloud components that power the next generation of agentic AI. The question is: how does your infrastructure stack up?

We cover:

- How infrastructure requirements change when scaling to trillion-parameter and mixture-of-experts models

- How full-stack optimization across infrastructure, orchestration, and observability improves performance and efficiency

- Architectural innovations that enable ultra-low latency, rack-scale AI systems

- Best practices for running production-grade AI workloads, including agentic AI systems

Speakers

