Optimize workloads for seamless auto-scaling and cost savings with serverless Kubernetes.

Wouldn’t it be nice to not have to think about infrastructure—and just focus on what you do best: coding, designing, and building world-changing products?

Many developers are moving to serverless for that very reason. Others still rely on Kubernetes to manage containerized deployments for its scalability, control, and reliability.

But what if you could combine the benefits of serverless and Kubernetes in one fast and reliable computing architecture? Enter serverless Kubernetes.

Let’s examine what serverless Kubernetes is, its benefits and drawbacks, and which real-world use cases gain the most from it.

Get started: Already a CoreWeave client? Learn how to deploy serverless applications on CoreWeave Cloud today.

What is a serverless Kubernetes service?

Serverless Kubernetes is a cloud deployment model for container management that combines the main benefit of serverless architecture (not having to manage infrastructure) with the fast, reliable performance of Kubernetes.

Over the past few years, Kubernetes has become the go-to tool for managing large groups of containers and mature deployments. Kubernetes efficiently manages resource allocation so that you only use what you need, and servers don’t have to sit idle when no compute is needed. However, its complexity requires companies to have in-house experts who can continuously run and maintain the Kubernetes platform, which puts this tool out of reach for many companies.

With serverless Kubernetes, developers run their applications as if in a serverless model while still enjoying the benefits of a bare-metal Kubernetes platform. A cloud provider sets up your Kubernetes cluster and manages the resources on a single orchestration layer.

Despite the name, “serverless” doesn’t mean there’s no server. (There has to be a server.) “Serverless” implies that developers don’t have to worry about the server.

Serverless architecture allows developers to focus on their code while a third-party cloud provider provisions, maintains, and secures the hardware. It’s scalable and often costs less than Kubernetes or traditional deployments because you only pay for the compute resources you use.

In that sense, serverless is a subset of what Kubernetes has to offer. Serverless lets you deploy an app (like an AI text-to-image app) to many customers without worrying much about a sudden surge of users, because serverless handles autoscaling and traffic routing for you. Here’s a more technical look at how serverless Kubernetes compares to a traditional Kubernetes setup.

Kubernetes:

- you spin up a pod to run code

- you need to specify the OS

- by default, autoscaling scales down to 1 replica at minimum, so at least one pod is always running

Serverless Kubernetes:

- with Knative, you can spin up a pod that only runs your code

- no need to specify the host OS that the pod will run on

- scale down to zero
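As a rough illustration of the "scale down to zero" point, here is a minimal sketch that builds a Knative Serving `Service` manifest as a Python dictionary and prints it as JSON (which `kubectl apply -f` accepts alongside YAML). The service name, image URL, and scale bounds are hypothetical placeholders, and the annotation keys assume a recent Knative Serving release; older releases spell them `minScale` and `maxScale`.

```python
import json


def knative_service(name: str, image: str, min_scale: int = 0, max_scale: int = 10) -> dict:
    """Build a Knative Serving Service manifest as a plain dict.

    min_scale=0 allows the service to scale to zero pods when idle.
    All argument values used below are illustrative, not provider defaults.
    """
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "metadata": {
                    "annotations": {
                        # Lower and upper bounds on the number of pods.
                        "autoscaling.knative.dev/min-scale": str(min_scale),
                        "autoscaling.knative.dev/max-scale": str(max_scale),
                    }
                },
                # Only the container image is specified; no host OS or node details.
                "spec": {"containers": [{"image": image}]},
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical service name and image, for illustration only.
    manifest = knative_service("text-to-image", "registry.example.com/text-to-image:latest")
    print(json.dumps(manifest, indent=2))
```

Note that the manifest names only the container image and the scale bounds. Everything about the underlying nodes is left to the platform.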

Is serverless Kubernetes the same as managed Kubernetes?

No.

Think of your hardware framework like your home. Serverless Kubernetes is your luxury apartment where you only pay for the utilities you use. Your cloud provider owns the apartment building (the overarching K8s), and you live in your unique apartment (a tenant cluster with a namespace). You can focus on your code while your cloud provider builds and maintains your infrastructure. And you only pay for the compute resources you use (in other words, no service fee).

Serverless Kubernetes does provide some flexibility. You can use your own tech stack on top of it as long as you package it and utilize Kubernetes infrastructure and plumbing to connect your applications, services, storage, and more. Again, think of renting; as long as you’re not structurally changing the building itself (the overarching K8s), it’s fine.

In a managed Kubernetes environment, you’re paying someone else to build you a house. As with building a home from scratch, management responsibilities and fees vary widely among providers. You’re still locked into the tech stack of your provider, and you pay for the compute resources you use. But you have more configuration and control over what your cluster will look like, and you pay for that control, plus any additional support you need.

There’s also a third scenario: managing and maintaining your own Kubernetes environment within a third party’s cloud platform. Unlike the managed Kubernetes service, you’re responsible for building your own house and all the management and maintenance that comes with it. This gives you a ton of control, but you’re still building on someone else’s land (using someone else’s hardware). And you carry most of the engineering cost and overhead.

Benefits of a serverless Kubernetes environment

In truth, many companies don’t need to configure their own K8s clusters. (And unlike a real house, there’s no promise that the Kubernetes home you built will retain its value over time.)

Many companies and developers care more about having access to incredible hardware and being able to host their serverless applications. This is especially true for compute-intensive workloads, like model training, inference, batch simulations, real-time streaming, and rendering. Serverless Kubernetes provides that in an efficient environment.

Unmatched performance, no infrastructure overhead

Many companies, especially start-ups, don’t have the time and resources to manage Kubernetes on their own. With serverless Kubernetes, they can take advantage of the speed and performance of a Kubernetes environment without the overhead costs.

Price: Pay-as-you-go

Serverless Kubernetes is priced the same way as serverless architecture: you only pay for what you use. No service or support fees. Just equitable pricing on the top-performing hardware.
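To make the pay-as-you-go idea concrete, here is a small arithmetic sketch comparing an always-on instance with one that is billed only while it is busy. The hourly rate and usage figures are invented for illustration and are not CoreWeave prices or measured savings.

```python
def always_on_cost(hourly_rate: float, hours_in_period: float) -> float:
    """Cost of keeping an instance running for the whole billing period."""
    return hourly_rate * hours_in_period


def pay_per_use_cost(hourly_rate: float, busy_hours: float) -> float:
    """Cost when you are billed only for the hours the workload actually runs."""
    return hourly_rate * busy_hours


# Hypothetical numbers, chosen only to illustrate the calculation.
HOURLY_RATE = 2.00      # assumed price per instance-hour
HOURS_IN_MONTH = 720.0  # 30 days * 24 hours
BUSY_HOURS = 180.0      # assumed hours per month the workload is serving traffic

if __name__ == "__main__":
    fixed = always_on_cost(HOURLY_RATE, HOURS_IN_MONTH)
    metered = pay_per_use_cost(HOURLY_RATE, BUSY_HOURS)
    saved_fraction = 1 - metered / fixed
    print(f"always on:   ${fixed:.2f}")
    print(f"pay per use: ${metered:.2f}")
    print(f"difference:  {saved_fraction:.0%}")
```

The gap depends entirely on how idle the workload is. A service that is busy around the clock would see no difference under this simple model.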

Easy auto-scaling

Leverage the top hardware that fits your workload needs—when you need it. Serverless Kubernetes features responsive auto-scaling, so you always have access to the resources you need. In the same cluster. Instantly.
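The core idea behind request-driven auto-scaling can be sketched in a few lines. This is a simplified model of concurrency-based scaling, not the algorithm of any particular autoscaler: real autoscalers such as Knative's average metrics over time windows and apply extra logic during bursts. The function name, target concurrency, and scale bounds below are assumptions made for illustration.

```python
import math


def desired_replicas(
    in_flight_requests: int,
    target_concurrency: int,
    min_scale: int = 0,
    max_scale: int = 10,
) -> int:
    """Return how many pods are needed so that each pod handles roughly
    target_concurrency requests at once, clamped to [min_scale, max_scale]."""
    if target_concurrency <= 0:
        raise ValueError("target_concurrency must be positive")
    wanted = math.ceil(in_flight_requests / target_concurrency)
    return max(min_scale, min(max_scale, wanted))


if __name__ == "__main__":
    # No traffic: the service can scale to zero.
    print(desired_replicas(0, target_concurrency=10))     # 0
    # Moderate traffic: 25 requests at 10 per pod needs 3 pods.
    print(desired_replicas(25, target_concurrency=10))    # 3
    # A sudden spike is capped at the configured maximum.
    print(desired_replicas(1000, target_concurrency=10))  # 10
```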

Integration with apps

Serverless Kubernetes allows users to deploy applications directly from an app catalog. Because the cloud provider exposes each application’s configuration (for example, in a YAML tab), developers can tweak a deployment at a relatively fine-grained level without needing to know anything very Kubernetes-specific.

What types of projects run well on serverless Kubernetes?

Compute-intensive workloads thrive in serverless Kubernetes environments because Kubernetes is well equipped to handle data-heavy, complex containerized workloads.

Paired with the responsiveness of serverless architecture, this lets developers spin applications up and down without concerns about hardware access. If your model sees a sudden spike in usage, serverless Kubernetes can handle it.

Best use cases for serverless Kubernetes:

- Inference serving

- Distributed training

- Batch simulations

- Rendering

- And more

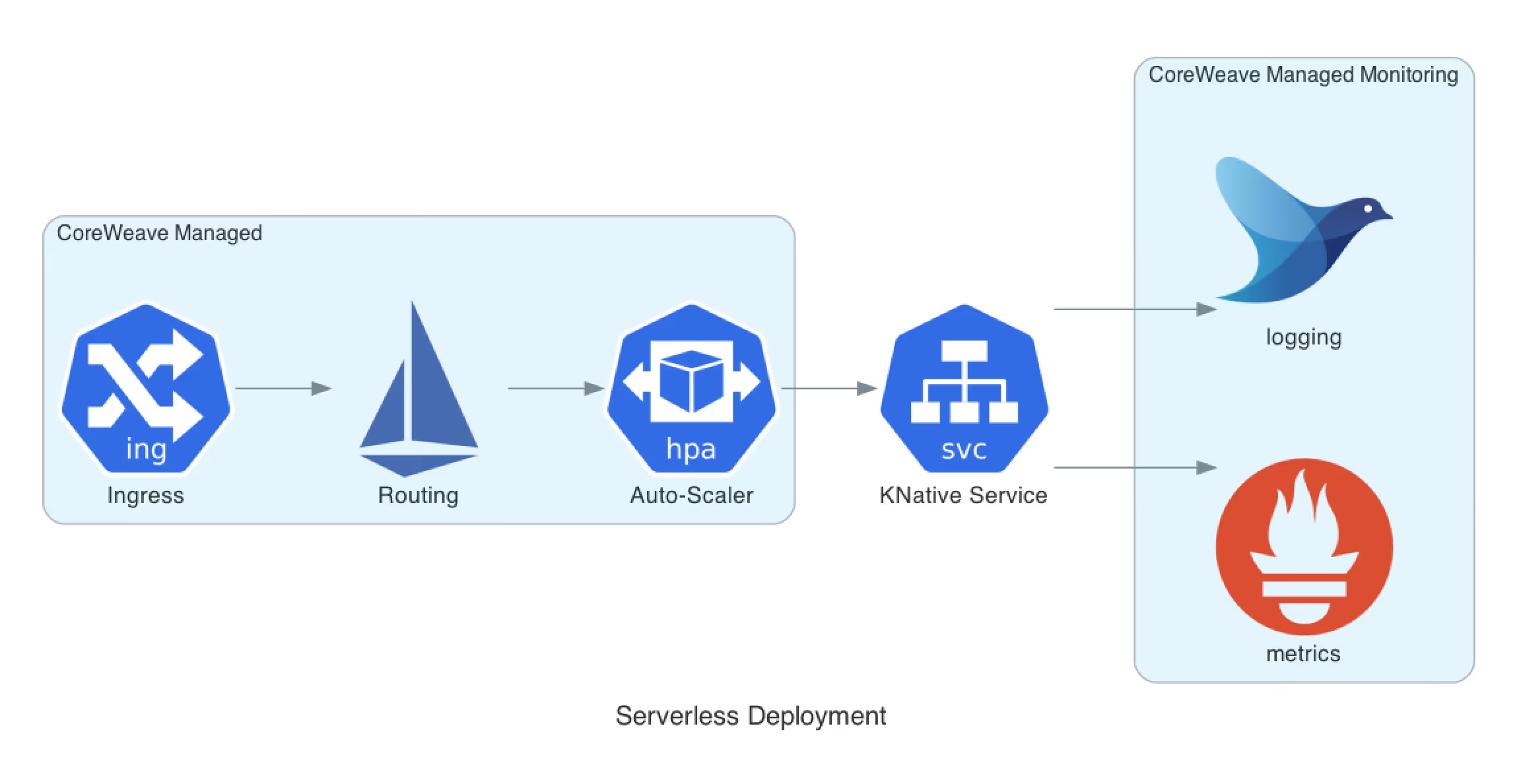

How serverless Kubernetes is woven into the entire CoreWeave Cloud

The CoreWeave Cloud is Kubernetes-native, designed to give you the performance advantages of bare-metal without the infrastructure overhead. Our platform was built to make the experience as seamless as possible and to provide the best performance. Clients can spin up new instances in seconds with responsive auto-scaling across thousands of GPUs.

Here’s a closer look at how CoreWeave Cloud compares to other providers.

1. Best-in-class hardware for fast spin-up times

We provide the industry’s broadest range of NVIDIA GPUs, highly configurable and highly available. Thanks to our partnerships with NVIDIA, Intel, and other industry leaders, we can bring our clients the latest technology as soon as it’s made available, such as NVIDIA HGX H100s.

Our unique networking also makes this solution special. CoreWeave Cloud’s network architecture is designed to handle 100Tbps+, and its decentralized design provides endless horizontal scaling of your workloads. And thanks to container image caching and specialized schedulers, your workload can be up and running in as little as 5 seconds. That’s fast, on fast, on fast.

2. Greater performance drives incomparable economics

Our pricing model is transparent, simple, and radically different from other large cloud providers. You truly only pay for the compute resources you use. We don’t charge for data transfers or egress.

When it comes to pricing, our infrastructure is what drives cost savings for our clients. Thanks to the bare-metal efficiency of our platform, clients find running on CoreWeave’s serverless Kubernetes infrastructure to be 50-80% less expensive than running on other major cloud providers.

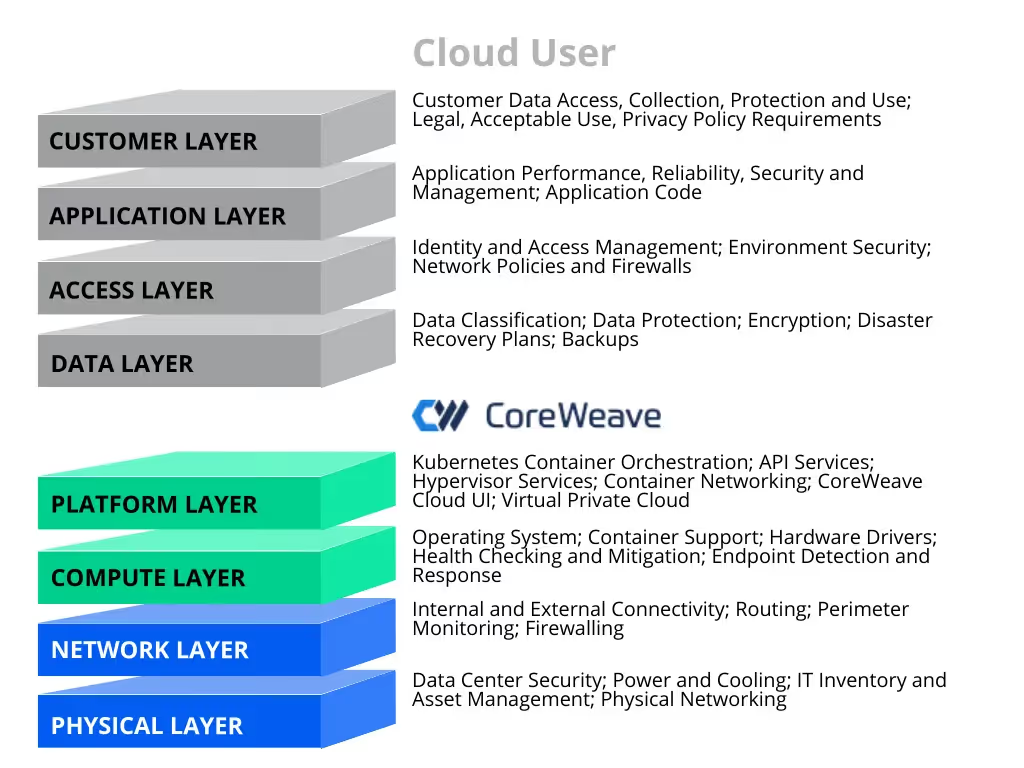

3. Fully managed, serverless Kubernetes

While other cloud providers will only cover the network and physical layers, CoreWeave manages all the control-plane infrastructure, cluster operations, and platform integrations. That’s less work our clients need to do to get up and running and less time spent maintaining the health and safety of their cluster.

This doesn’t mean clients give up all configuration and customization. When management of a Layer 2 environment (the data link layer) is required, CoreWeave Virtual Private Cloud (VPC) returns networking control to the user. This allows you to deploy a virtual firewall, terminate IPSec VPNs, manage routing, control IP addresses, and more.

Better networking. Blazing-fast bare-metal. Reliable performance. And greater cost-efficiency for your projects. Let us focus on the infrastructure, so you can spend more time on what matters most: building your innovations. Want to learn more about serverless Kubernetes? Talk with an expert from our team today.
